I also noticed that there’s a recently implemented option in Hugging Face’s BERT which allows us to apply gradient checkpointing easily. It is an argument that is specified in BertConfig, and the config object is then passed to from_pretrained. I also tried that, but I have the same issues that I mentioned above: 1) the performance does not match that of the setting without gradient checkpointing.
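For reference, here is a minimal sketch of the built-in option, assuming a recent `transformers` release where checkpointing is switched on with `gradient_checkpointing_enable()` (older releases took a `gradient_checkpointing` argument in the config instead, as described above). The tiny config values are hypothetical and only there so the example runs without downloading weights.

```python
import torch
from transformers import BertConfig, BertModel

torch.manual_seed(0)

# Hypothetical tiny config so the sketch runs without downloading weights
config = BertConfig(vocab_size=100, hidden_size=32, num_hidden_layers=2,
                    num_attention_heads=2, intermediate_size=64,
                    max_position_embeddings=64)
model = BertModel(config)

# Turn on per-layer checkpointing (assumes a release that provides this method)
model.gradient_checkpointing_enable()
model.train()  # checkpointing is only applied in training mode

input_ids = torch.randint(0, 100, (2, 10))
attention_mask = torch.ones_like(input_ids)
token_type_ids = torch.zeros_like(input_ids)

out = model(input_ids=input_ids, attention_mask=attention_mask,
            token_type_ids=token_type_ids)
out.last_hidden_state.sum().backward()

# Every encoder parameter should still receive a gradient
encoder_has_grads = all(p.grad is not None for p in model.encoder.parameters())
print(model.is_gradient_checkpointing, encoder_has_grads)
```

Checking that the encoder parameters actually receive gradients is a cheap sanity test before comparing validation metrics with and without checkpointing.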

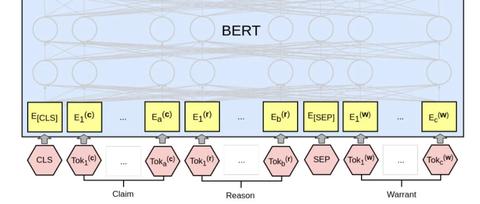

Yes, this model is just part of a larger network, i.e., top_vec, which is the output of this model, is used by another model. I see top_vec as a vector that holds the version of x (i.e., src) encoded by BERT. In a sense, the weights associated with this class should be updated (i.e., learned) during training. In my investigations, I noticed that this part of the model consumes much of the memory, so I thought it’d be better to checkpoint it. Isn’t it true that wherever we have learning (i.e., the update of model parameters), we can use gradient checkpointing?

Fine-tuning is brittle when following the recipe from Devlin et al. Instead, the feature-based approach, where we simply extract pre-trained BERT embeddings as features, can be a viable, and cheap, alternative. However, it’s important to not use just the final layer, but at least the last 4, or all of them.
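The feature-based approach mentioned above can be sketched roughly as follows. This is only an illustration: the tiny, randomly initialised config is a hypothetical stand-in for a pretrained checkpoint, and concatenating the last four layers is one possible way to combine them, not the only one.

```python
import torch
from transformers import BertConfig, BertModel

torch.manual_seed(0)

# Hypothetical tiny config; random weights stand in for a pretrained checkpoint
config = BertConfig(vocab_size=100, hidden_size=32, num_hidden_layers=6,
                    num_attention_heads=2, intermediate_size=64,
                    max_position_embeddings=64)
model = BertModel(config)
model.eval()  # feature extraction only, no parameter updates

input_ids = torch.randint(0, 100, (2, 10))
attention_mask = torch.ones_like(input_ids)

with torch.no_grad():
    out = model(input_ids=input_ids, attention_mask=attention_mask,
                output_hidden_states=True)

# hidden_states holds the embedding output plus one tensor per encoder layer
last_four = out.hidden_states[-4:]

# Concatenate the last four layers along the feature dimension
features = torch.cat(last_four, dim=-1)
print(features.shape)
```

Because nothing is back-propagated through BERT here, no activations need to be kept for a backward pass, which is where the memory saving of this approach comes from.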

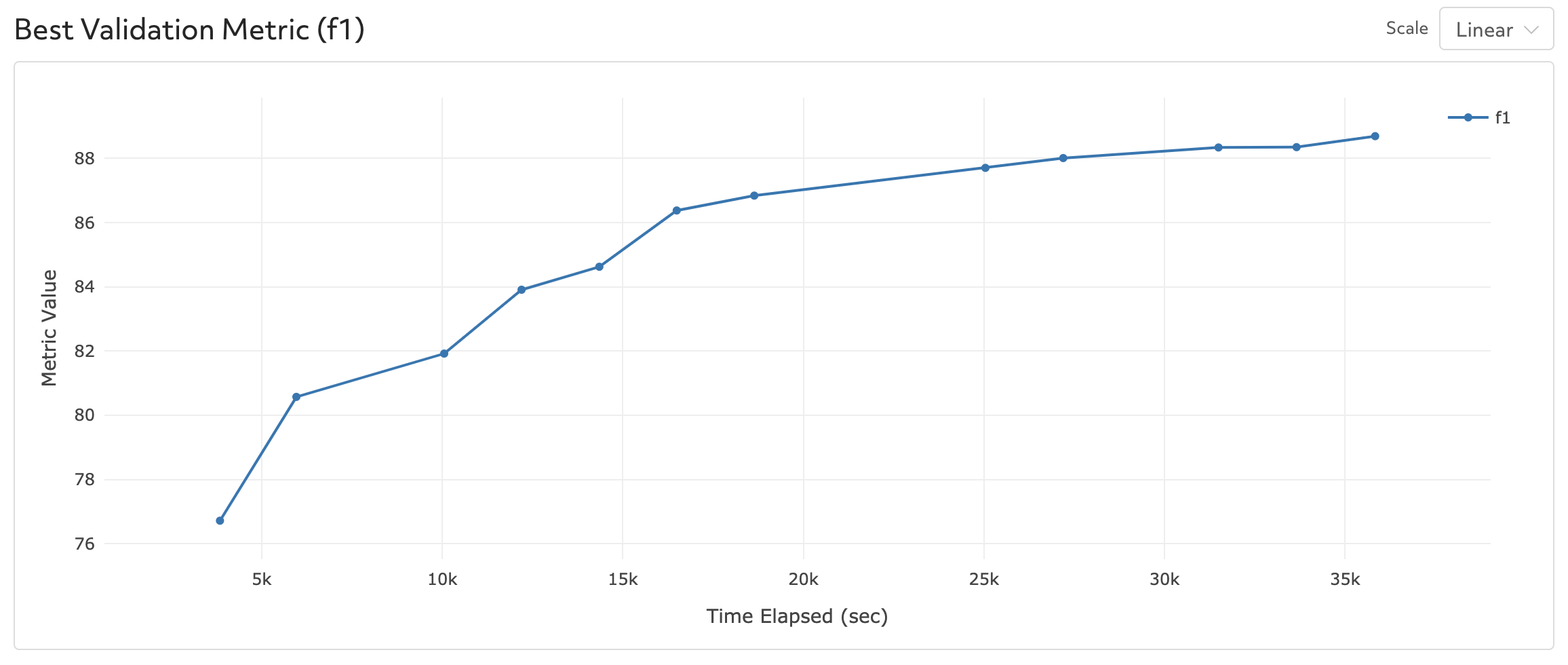

I’m trying to apply gradient checkpointing to Hugging Face’s Transformers BERT model. I’m not sure I’m doing it right, though! Here is my code snippet wrapped around the BERT class:

    class Bert(nn.Module):
        def __init__(self, large, temp_dir, finetune=False):
            super().__init__()
            self.model = BertModel.from_pretrained('allenai/scibert_scivocab_uncased', cache_dir=temp_dir)
            self.finetune = finetune  # whether BERT should be fine-tuned or not

The checkpointed function calls the module on its positional inputs:

    output = module(inputs[0], attention_mask=inputs[1], token_type_ids=inputs[2])

The BERT call itself, with and without the explicit casts to long:

    top_vec, _ = self.model(x.long(), attention_mask=mask.long(), token_type_ids=segs.long())
    top_vec, _ = self.model(x, attention_mask=mask, token_type_ids=segs)

As I’m checkpointing BERT’s forward function, the memory usage drops significantly (~1/5), but I’m getting relatively inferior performance compared to non-checkpointing, in terms of the metrics (for my task, which is summarization) that I calculate on the validation set. Another observation: while checkpointing, the model’s training speed also increases considerably, which is at odds with what I have learned about gradient checkpointing.

Is there any problem with the implementation? Or have I done some part of it wrong? Thanks for your response!
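One possible cause of these symptoms, offered as an assumption rather than a diagnosis of the code above: with the reentrant variant of `torch.utils.checkpoint.checkpoint`, no gradient reaches the parameters inside the checkpointed function when none of its tensor inputs require gradients, which is the case for integer token IDs. The sketch below uses a hypothetical `TinyEncoder` as a stand-in for BERT and a linear `head` as a stand-in for the rest of the network.

```python
import torch
import torch.nn as nn
from torch.utils.checkpoint import checkpoint

torch.manual_seed(0)


class TinyEncoder(nn.Module):
    """Hypothetical stand-in for BERT: integer token ids in, float vectors out."""

    def __init__(self, vocab_size=50, hidden=8):
        super().__init__()
        self.embed = nn.Embedding(vocab_size, hidden)
        self.proj = nn.Linear(hidden, hidden)

    def forward(self, ids):
        return torch.tanh(self.proj(self.embed(ids)))


def encoder_grad(encoder, head, ids, mode):
    """Run one backward pass; return the gradient on encoder.proj.weight (or None)."""
    encoder.zero_grad(set_to_none=True)
    head.zero_grad(set_to_none=True)
    if mode == "plain":
        top_vec = encoder(ids)
    else:
        top_vec = checkpoint(encoder, ids, use_reentrant=(mode == "reentrant"))
    head(top_vec).sum().backward()
    grad = encoder.proj.weight.grad
    return None if grad is None else grad.clone()


encoder, head = TinyEncoder(), nn.Linear(8, 1)
ids = torch.randint(0, 50, (4, 6))  # integer inputs never require grad

plain = encoder_grad(encoder, head, ids, "plain")
reentrant = encoder_grad(encoder, head, ids, "reentrant")
non_reentrant = encoder_grad(encoder, head, ids, "non_reentrant")

print("reentrant encoder grad is None:", reentrant is None)
print("non-reentrant matches plain:", torch.allclose(plain, non_reentrant))
```

If this is what happens in the original setup, BERT would effectively stay frozen while the rest of the network trains. That would be consistent with both faster steps and weaker validation metrics, though the snippet alone cannot confirm it. Inspecting whether BERT’s parameters have a non-`None` `.grad` after one backward pass would settle the question.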
